This article is directed to analytically mature, technology-savvy managers who deal with corporate IT infrastructure and strategy. It explains quantum computing in terms relevant to IT managers and suggests future business opportunities for exploiting this new technology.

Yet another wave will be impacting information technology (IT) globally. It is called quantum computing (QC). The good news is that IT managers do not need to do anything for another 3-5 years. However, they should now start thinking differently about QC, especially if they are part of an analytics-driven corporation. The goal of this article is to answer these questions:

- What should IT managers know about QC?

- How should IT managers plan differently for business analytics?

QC is Weird

Be prepared to think differently, very differently, about how QC works. It feels like watching an old Twilight Zone episode on a black-and-white TV.

After a century of upheaval in theoretical physics, scientists now conclude that quantum mechanics is more ‘natural’ than classical Newtonian physics. Fortunately for our sanity, quantum mechanics lives within its own world, which deals with the very very very small. However, being small does not imply being insignificant. Everything humans know, from finger movements on a keyboard to formation of galaxies, owes its existence to the basic properties of quantum mechanics. It is the foundation for reality as we sense it, although this foundation is beyond our intuition.

Why is QC weirdly important to an IT manager? The answer usually invokes a deep explanation of Heisenberg’s Uncertainty Principle, entanglement, superposition, coherence, and the like. If you have the time, take a semester-long graduate course on quantum mechanics. [2] If you are short on time, read the cheat-sheet below.

QC is All About the Qubit

To simplify, the key concept for an IT manager is the qubit, which is like the bits and bytes in classical computers. However, these qubits possess weird super-powers.

Since May 2016, the IBM Quantum Experience has offered a 5-qubit system for experimentation, of which tens of thousands of researchers and students have availed themselves. [3] Like the early computers of the 1950s, this system cannot support practical applications, since 5 bits can represent only 32 unique states. However, unlike a classical computer, which holds just one of those states at a time, 5 qubits can represent all 32 states simultaneously, implying that all states are possible according to an underlying probabilistic wave function …whatever that is!
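The contrast between 5 bits and 5 qubits can be sketched in a few lines of plain Python. This is only an illustration under simplifying assumptions: the amplitudes are real numbers in a uniform superposition (real hardware uses complex amplitudes), and the names `amplitudes` and `measure` are invented for this sketch, not part of any IBM interface.

```python
import math
import random

NUM_QUBITS = 5
NUM_STATES = 2 ** NUM_QUBITS  # 32 basis states, 00000 through 11111

# A classical 5-bit register holds exactly one of the 32 values.
classical_register = 0b10110

# A 5-qubit register is described by 32 amplitudes, one per basis state.
# Assumption: a uniform superposition with real-valued amplitudes.
amplitudes = [1 / math.sqrt(NUM_STATES)] * NUM_STATES

# The chance of observing a state is the squared magnitude of its amplitude.
probabilities = [abs(a) ** 2 for a in amplitudes]


def measure(probabilities, rng):
    """Pick one basis state at random and return it as a bit string."""
    index = rng.choices(range(len(probabilities)), weights=probabilities)[0]
    return format(index, f"0{NUM_QUBITS}b")


rng = random.Random(7)
outcome = measure(probabilities, rng)
print(len(amplitudes), round(sum(probabilities), 6), outcome)
```

Reading the register yields a single 5-bit answer, even though all 32 amplitudes took part in describing it beforehand.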

QC is Like a Mass Coin Toss

Consider the analogy of a mass coin toss. Fifty persons are asked to flip coins in the air. They are packed tightly into a room, and each is given a special coin that is numbered and unfairly biased toward either heads or tails. On 1-2-3, everyone flips their coin into the air, letting it fall on the floor. Results are tabulated for each coin.

For a moment, the spinning of each of the 50 coins is affected by all the other coins via air currents or collisions. This is like QC entanglement. While the coins are spinning, it makes little sense to ask whether a certain coin is heads or tails. This is like QC uncertainty. Further, the coins are spinning so fast that each coin's state is not heads or tails but a blending of heads and tails. This is like QC superposition. Finally, when the coins hit the floor, the entanglement suddenly ceases. This is like losing QC coherence, which is a critical design issue for QC.

QC has Exponential Power

As with all analogies, the mass coin toss is not true to the real situation. Here is where the weirdness starts…

In the coin toss, two coins may interact. However, two coins represent only ONE of 4 states (00, 01, 10, 11) while spinning. Likewise, 3 coins represent ONE of 8 states, 4 coins represent ONE of 16 states, and so on. In contrast, 3 qubits represent ALL of the 8 states simultaneously because of superposition. The point is: the n-coin system represents n bits of information, while the n-qubit system represents 2^n bits of information. At first, when n is small, there is little difference between the two systems. However, as n becomes large, the n-qubit system becomes exponentially more powerful at representing complex systems.
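One way to feel this gap is to ask how much memory a classical machine would need just to write down the full description of n qubits. The sketch below assumes each of the 2^n amplitudes is stored as one complex number of 16 bytes (two 64-bit floats). That storage size is an assumption of this example, not a figure from any vendor.

```python
BYTES_PER_AMPLITUDE = 16  # assumption: one complex number as two 64-bit floats


def state_vector_bytes(num_qubits):
    """Bytes needed to store all 2**n amplitudes of an n-qubit register."""
    return (2 ** num_qubits) * BYTES_PER_AMPLITUDE


for n in (3, 10, 30, 50):
    print(f"{n:>2} qubits: {2 ** n:>21,} amplitudes, "
          f"{state_vector_bytes(n):>22,} bytes")
```

Under that assumption, 30 qubits already need 16 GiB and 50 qubits need 16 PiB, which is roughly where classical simulation stops being practical.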

The situation gets weirder… If they are entangled, qubits can react to changes in other qubits instantaneously regardless of their distance apart. If this entanglement is wired together as logic gates, then any dynamic system of 2^n complexity can be simulated (i.e., its future behavior predicted from its current state). Further, if this wiring contains searching or optimizing logic, the discovery or enhancement of the system could be predicted. Hence, QC exponential power can also be smart.

WARNING… The power of exponential growth is not intuitive! Although compound interest on savings and Moore’s Law for computers are familiar examples of exponential power, most persons assume that changes in the normal world occur linearly. Further, the early stages of exponential changes appear to behave linearly, but then their growth outstrips human imagination! When those exponential changes explode (or collapse) in startling ways, people are astonished and assume new and unknown causes.

Given this exponential power, a quantum computer will eventually out-perform totally (completely, absolutely…) any classical computer for ‘hard’ algorithms. Researchers are predicting that this tipping point will happen sometime over the next 3-5 years, when QC systems with 50 or more qubits are reliably commercialized.

In comparison, the number of sand grains on Earth is roughly 10^19 and the number of stars in the entire universe is roughly 10^20. A 100-qubit computer (with 2^100, or about 10^30, states) could represent, simulate, and optimize any conceivable complex system, such as improved flow configurations across large oil refineries nationally or innovative molecular structures for new drugs tailored to specific genetic profiles.

QC is Like a Power Utility

Should an IT manager purchase a commercialized QC system? Probably not, unless you are a large government agency with a three-letter name. The likely answer is to think of using QC as a cloud service provided by an industrial-scale utility, like that of an electrical power plant.

This will differ from the usual data center, which consists of many aisles of similar server racks. The difference is that a QC system must be cooled to very close to absolute zero to support the quantum coherence needed for entanglement. There is a huge number of gadgets to be cooled this way.

Also, a reliable 50-qubit system will need considerable error correction, which requires more qubits. Some estimates state a thousand times as many, implying that a reliable set of 50 logical qubits may require 50,000 physical qubits.

Hence, do not expect QC to be small or inexpensive anytime soon!

QC is Like a GPU

Another way to think about using QC is to compare it to a Graphics Processing Unit (GPU). Remember the GPU evolution and its impact on desktop computing. Initially, early processors pushed characters to video buffers that generated a raster scan on a TV monitor. As graphics evolved, this function was taken over by a separate GPU board, providing HD resolution for text, images, and video. Think DirectX for Windows 10. More recently, new interfaces have enabled the use of GPU boards for fast matrix calculations, unrelated to graphics processing.

Likewise, QC will not replace classical computing. QC will enhance classical computing via web services for specific ‘hard’ algorithms. The good news is that QC enhancements will be accessible from any device, from large mainframes to mobile smartphones. Access to these QC enhancements will depend on special interfaces (APIs). For example, Nvidia CUDA and OpenCL provide interfaces to GPUs for fast matrix calculations.

Likewise, the IBM Quantum Experience offers QISKit, a thin open-source Python wrapper that uses the HTTP API to execute OpenQASM code on IBM’s 5-qubit QC system. These QC interfaces will be the gateways for reaping the benefits of QC enhancements. [4]
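To give a flavor of what travels through such a gateway, here is a minimal sketch that assembles a small OpenQASM 2.0 program as a Python string. The function name `bell_state_qasm` is invented for this example. The step that submits the program is deliberately left out, because the wrapper's calls are not described in this article and depend on the QISKit version in use.

```python
def bell_state_qasm():
    """Return an OpenQASM 2.0 program that entangles two qubits."""
    lines = [
        "OPENQASM 2.0;",          # language version header
        'include "qelib1.inc";',  # standard gate library
        "qreg q[2];",             # two qubits
        "creg c[2];",             # two classical bits for the results
        "h q[0];",                # put qubit 0 into superposition
        "cx q[0],q[1];",          # entangle qubit 1 with qubit 0
        "measure q -> c;",        # read both qubits into the classical bits
    ]
    return "\n".join(lines)


program = bell_state_qasm()
print(program)
```

The classical side only prepares text and later reads back measurement counts. All of the quantum work happens on the remote system.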

When will you need these QC enhancements? The answer is: …when you need to crunch a ‘hard’ algorithm.

QC is For Hard Algorithms

What are these ‘hard’ algorithms? Just as a GPU is used for special 3D graphics, QC has the potential to find solutions to problems that do not have ‘easy’ algorithms. The adjectives easy and hard imply a rough measure of the amount of resources (time, processing, storage) required to find a solution.

Computer scientists roughly grade the complexity of algorithms using the notation O(n), O(n^2), or O(2^n), implying that complexity increases in a linear, polynomial, or exponential manner, respectively. Easy algorithms are the linear and polynomial ones, while hard algorithms are of the exponential kind.
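The three grades can be made concrete by counting the steps that three simple brute-force tasks take on the same list. The tasks and function names below are illustrative choices for this sketch, not drawn from any particular analytic package.

```python
from itertools import combinations


def linear_steps(items):
    """O(n): look at each item once, e.g. totalling sales."""
    return sum(1 for _ in items)


def pairwise_steps(items):
    """O(n^2): compare every pair of items, e.g. finding similar customers."""
    return sum(1 for _ in combinations(items, 2))


def subset_steps(items):
    """O(2^n): examine every subset, e.g. brute-force search for a best bundle."""
    return sum(
        1
        for size in range(len(items) + 1)
        for _ in combinations(items, size)
    )


for n in (5, 10, 20):
    items = list(range(n))
    print(n, linear_steps(items), pairwise_steps(items), subset_steps(items))
```

Doubling the list from 10 to 20 items doubles the linear count and roughly quadruples the pairwise count, but multiplies the subset count by about a thousand.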

Problems for which only hard algorithms are known include the NP-complete problems, where NP means “nondeterministic polynomial time”. Hard algorithms are usually avoided by finding a ‘good-enough’ algorithm that generates heuristic guesses of the optimal answer. The point is that no easy algorithm that generates an optimal solution has been found. Further, we do not know whether any easy algorithm exists. This topic has an evolving literature. [5]

This is a critical distinction for companies engaging in big data analytics. An IT manager should understand resource tradeoffs when problem complexity increases. The business questions are… As the problem is scaled, how do the required resources behave? What are the impacts on time-to-solution? And how quickly can a company take action based on the analytics?

For example, assume that a company prototypes an analytic application for a single store. When it proves useful, the corporation plans to scale the application across all stores. What will happen?

For one store, there are several thousand customers. But across all stores, there are several million customers, implying a thousand-fold increase. If the algorithm for the analytic application has linear complexity, requiring 10^3 times the resources, the IT manager will probably move the application from a small server to a very large server. Problem solved! If the algorithm is polynomial, requiring 10^6 times the resources (as with most analytics), the manager will spin up a hundred elastic cloud instances as needed. Problem solved! If the algorithm is exponential, requiring 2^1000 or around 10^301 times the current resources, the manager will hit the ‘exponential wall’. The problem will NEVER be solved!
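The arithmetic behind the three outcomes can be checked directly, since Python handles arbitrarily large integers. The sketch follows the article's simplification of treating the thousand-fold increase as the problem size; the variable names are chosen for this example.

```python
import math

SCALE = 1000  # thousands of customers grow to millions of customers

linear_factor = SCALE            # O(n): 10^3 times the resources
quadratic_factor = SCALE ** 2    # O(n^2): 10^6 times the resources
exponential_factor = 2 ** SCALE  # O(2^n): 2^1000 times the resources

# Size of the exponential factor, measured two ways.
decimal_digits = len(str(exponential_factor))
power_of_ten = SCALE * math.log10(2)

print(f"linear:      {linear_factor:,}")
print(f"quadratic:   {quadratic_factor:,}")
print(f"exponential: a {decimal_digits}-digit number, about 10^{power_of_ten:.0f}")
```

Since 2^1000 works out to roughly 10^301, it dwarfs even the 10^30 states of the 100-qubit example, which is why no amount of added servers gets past the exponential wall.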

An important twist to this example is… How does business value increase if quicker solutions could be found?

For example, assume that another company depends on an analytic application for a crucial business process. It currently takes more than an hour to compute, thus affecting daily business cycles. What if the company could do that same analytic in less than a second? If so, the company could embed that analytic into a smart phone app so that customers could zap product barcodes as they wander the store. That would change basic customer interactions with that company. What is the business value for this huge reduction in analytics time-to-action?

QC is For Operationalizing Analytics

As suggested, start thinking about QC in terms of BIG business opportunities that create sustainable competitive dominance and could transform the nature of your industry. Think about making key business processes smarter. Eliminate the friction in interacting with customers and suppliers. In one click, the transaction is completed, as with Amazon, Uber and Airbnb. The critical enabler is the analytics that operationalize those business processes. This is not your mother’s analytics, where a two-week study results in only a slide deck.

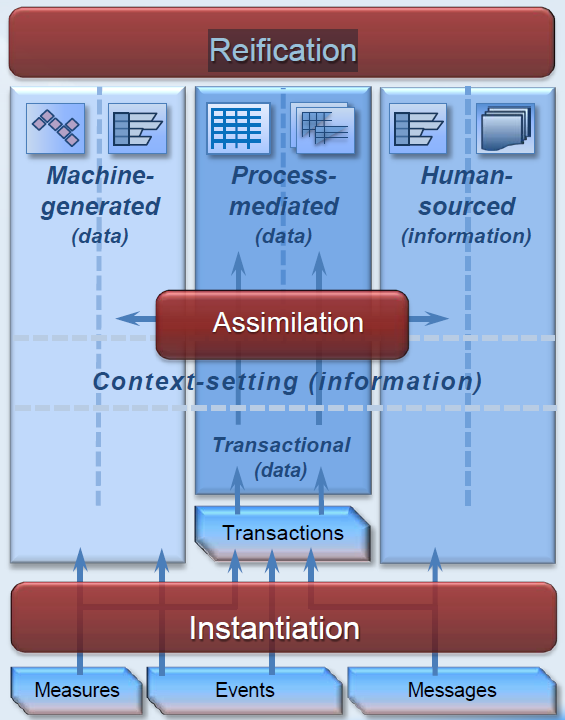

The key principle is… Analytics enables one to generalize reliably beyond known data. There is a clear distinction between known (or seen) data and unknown (or unseen) data. In its many forms, analytics analyzes known data (such as from a data warehouse or data lake) to create a model, which is used to generalize about unknown data (such as a prediction of future sales revenue, the likelihood of click-thru for an ad impression, or a recommendation of a movie or book).

The model is trained with known data. However, a portion of the known data is ‘withheld’ (hidden) to validate the model. The quality of the resulting model depends on how carefully the data for training is kept separate from the data for validation. Once the model is trained and validated, it can be operationalized within a business process to enhance its ‘smartness’. The effort to train a model may be difficult and costly, but once done, the execution of the model is usually quick and inexpensive.
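The train-then-validate routine described above can be sketched with nothing but the standard library. The data here is synthetic (a made-up relationship between ad spend and sales) and the helper names are invented for this example. A real project would use its own data and a proper modeling library.

```python
import random


def split_holdout(rows, holdout_fraction=0.2, seed=0):
    """Shuffle a copy of rows and return (training, validation) lists."""
    shuffled = list(rows)
    random.Random(seed).shuffle(shuffled)
    n_holdout = int(len(shuffled) * holdout_fraction)
    return shuffled[n_holdout:], shuffled[:n_holdout]


def fit_line(points):
    """Ordinary least squares for y = slope * x + intercept."""
    n = len(points)
    mean_x = sum(x for x, _ in points) / n
    mean_y = sum(y for _, y in points) / n
    sxx = sum((x - mean_x) ** 2 for x, _ in points)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in points)
    slope = sxy / sxx
    return slope, mean_y - slope * mean_x


def mean_squared_error(model, points):
    """Average squared gap between the model's predictions and the data."""
    slope, intercept = model
    return sum((slope * x + intercept - y) ** 2 for x, y in points) / len(points)


# Hypothetical known data: ad spend (x) versus sales (y), with noise.
rng = random.Random(42)
known = [(x, 3.0 * x + 5.0 + rng.gauss(0, 1)) for x in range(100)]

training, validation = split_holdout(known)
model = fit_line(training)                                 # sees training data only
validation_error = mean_squared_error(model, validation)   # scored on withheld data
print(len(training), len(validation), model, validation_error)
```

The key design choice is that `fit_line` never touches the withheld rows, so the validation error is an honest estimate of how the model behaves on unseen data.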

How does this training-validating paradigm relate to QC?

For a decade, there has been a transformation toward designing business processes not as batch processes with data-at-rest, but as streaming (or continuous) processes with data-in-motion. For the older batch processes, the assumption is that models trained on previous batches are still valid for subsequent batches, which may vary in time, geography, customer segments, and product lines. Global economics have become too complex for this assumption to support one-click transactions. Companies are being forced to perform training continuously as part of processing streaming data. Hence, the time-to-action demands on analytics are increasing and steadily exceeding the capabilities of classical computing.
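Continuous training on data-in-motion can be pictured as a model that is nudged by every observation as it arrives, instead of being rebuilt from a stored batch. The sketch below is a toy under stated assumptions: the stream is synthetic (y = 2x + 1 with no noise), and the class, the generator, and the learning rate of 0.1 are all choices made for this example.

```python
import random


def stream_of_sales(count, seed=0):
    """Hypothetical data-in-motion: yields (x, y) pairs where y = 2x + 1."""
    rng = random.Random(seed)
    for _ in range(count):
        x = rng.random()
        yield x, 2.0 * x + 1.0


class OnlineLinearModel:
    """A one-variable linear model updated one observation at a time."""

    def __init__(self, learning_rate=0.1):
        self.slope = 0.0
        self.intercept = 0.0
        self.learning_rate = learning_rate

    def predict(self, x):
        return self.slope * x + self.intercept

    def update(self, x, y):
        """One stochastic-gradient step on the squared prediction error."""
        error = self.predict(x) - y
        self.slope -= self.learning_rate * error * x
        self.intercept -= self.learning_rate * error


model = OnlineLinearModel()
for x, y in stream_of_sales(5000):
    model.update(x, y)  # training rides along with the stream

print(round(model.slope, 3), round(model.intercept, 3))
```

Each update is cheap here, but the cost of one update multiplied by the arrival rate of the stream is what sets the time-to-action budget.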

QC Impacts Packaged Analytics

A company can use analytical applications in two ways: custom and packaged. A custom analytic is developed for a certain company to solve a specific business problem. The development can be accomplished in a variety of ways, although all will use the basic QC API from a conventional IDE (integrated development environment), such as Python within a Jupyter notebook. Custom analytics will be the first QC applications to emerge; however, their impact will be limited to the company that builds them.

Substantial impacts across industries will occur when packaged analytical applications embedding QC smarts emerge for broad use cases, such as marketing campaign management and manufacturing quality control. Hence, watch for the established application vendors to be strong adopters of QC to embed hard analytic algorithms within their services.

QC Enables Knowledge Flows

From a strategic perspective, there is a ‘big shift’ in how wealth is accumulated globally. Instead of being created from knowledge stocks (such as proprietary secrets, patents, and the like), creation of wealth is shifting to knowledge flows based on a company’s ability to detect, capture, distill, and exploit many diverse data streams. [6]

Unlike current data lake strategies, wealth derived from knowledge flows is only sustainable if new knowledge is created and contributed back into the community. As Hagel, Seely Brown and Davison state in Abandon Stocks, Embrace Flows, “knowledge flows tend to concentrate among participants who are sharing with, and learning from, each other.” This implies huge computational demands from deep learning algorithms to properly digest flows and share knowledge. QC may be the only viable approach to perform this function on a global scale.

QC Action Plan

QC is yet another tech wave with high uncertainty but also high potential for certain use cases. From the perspective of an IT manager, the issues are not whether or when a commercially viable QC system is available. The key issues are:

- What are the real business impacts that people can make with QC?

- What are the huge business opportunities that QC use cases will enable?

- Is it valuable to manage critical business processes in terms of their computational complexity?

Here are practical suggestions for preparing your corporate IT strategy for the arrival of the QC utilities.

- Put QC on your 5-year radar. Alert IT strategy and planning staff to QC potential so that all can start browsing QC materials as they appear in trade publications and events. Create an alert on WSJ (and the like) for any QC articles that focus on the business implications of QC.

- Monitor current analytic applications for computational complexity. Do a complete census of your analytic apps. Watch carefully whenever an application is scaled upwards. How do the execution time, storage requirement, and I/O bandwidth change? Also, measure the time-to-action to account for end-to-end reaction time. Breathe easy if your algorithms have linear or polynomial behavior! If not, get serious about QC by investing more resources in these suggestions.

- Think about BIG opportunities for operationalizing analytics. Put your innovation hat on. Imagine that you were a customer or supplier or a widget on the assembly line. How could you make your life better and happier?

- Prepare your data science team. They are probably heads-down in too many projects. To them, QC is a distant fantasy, so go slow. Start conversations about the computational complexity of machine learning algorithms and the types of cloud computing on which they have come to rely.

- Form proper technology partnerships. Start asking your strategic tech partners about their QC plans. If their answer is a blank stare, do not attempt to educate them. Find partners for whom this question stimulates useful conversations. This suggestion applies especially to strategic vendors for your IT infrastructure and advanced packaged analytic applications.

- Keep an open and flexible mind. QC is weird and nonintuitive. It requires a fundamental departure from current IT concepts and technology. Just like Artificial Intelligence starting in the 1960s, QC technology will have many ups and downs, traversing the S-shaped curve multiple times. Over the coming decades, it will appear to be dead and then the greatest technology of mankind. Surf above the technology waves for this one!
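The monitoring suggestion in the list above can be turned into a rough rule of thumb: record the cost of an application at several data sizes, then estimate how steeply cost grows with size. The sketch below fits a straight line on log-log axes. The function names, the sample measurements, and the cut-off values of 1.2 and 3.5 are all assumptions made for illustration.

```python
import math


def growth_exponent(measurements):
    """Least-squares slope of log(cost) versus log(size).

    measurements is a list of (size, cost) pairs, e.g. (rows, seconds).
    A slope near 1 suggests linear growth and near 2 quadratic growth.
    """
    xs = [math.log(size) for size, _ in measurements]
    ys = [math.log(cost) for _, cost in measurements]
    mean_x = sum(xs) / len(xs)
    mean_y = sum(ys) / len(ys)
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    return sxy / sxx


def classify(exponent):
    """Assumed thresholds; tune them to your own tolerance."""
    if exponent <= 1.2:
        return "roughly linear"
    if exponent <= 3.5:
        return "polynomial"
    return "possibly exponential, investigate"


# Hypothetical measurements: (number of records, run time in seconds).
linear_app = [(100, 1.0), (200, 2.0), (400, 4.0)]
quadratic_app = [(100, 1.0), (200, 4.0), (400, 16.0)]
exponential_app = [(10, 2.0 ** 10), (20, 2.0 ** 20), (40, 2.0 ** 40)]

for name, data in [("linear", linear_app),
                   ("quadratic", quadratic_app),
                   ("exponential", exponential_app)]:
    slope = growth_exponent(data)
    print(f"{name:>11}: slope {slope:.2f} -> {classify(slope)}")
```

A fitted slope that keeps climbing as larger sizes are added is the warning sign, since truly exponential cost has no fixed exponent on a log-log plot.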

Notes

[1] IBM QC announcement: The IBM announcement on March 6, 2017 contains substantive content with several interesting videos. The new Quantum API is available on this open-source github. A TED talk by Jerry Chow, IBM Manager of Experimental Quantum Computing, explained an actual qubit board in December 2015. There are substantive commentaries on IBM’s announcement from Nature, Scientific American and MIT Technology Review.

[2] QC backgrounder: The Wikipedia entry for Quantum Computing is a good starting point. Read lightly, and follow a few of the numerous links (such as the list of QC companies, of which there are lots!). Don’t laugh, but Khan Academy has a section on Quantum Physics. It is a gentle introduction, which includes a good lesson on the Heisenberg uncertainty principle. Also, the latest issue of The Economist devoted its Technology Quarterly to the article Here, There and Everywhere. Excellent! But be prepared for eight dense (hard-copy) pages.

[3] IBM Quantum Experience: Would you like hands-on experience with QC? Try the IBM Quantum Experience, which is not for the techie faint-hearted. The amount of global attention that this site has received over the past year (40K users running 275K experiments) is amazing. It was also used as part of an online curriculum for 1,800 students. The archived webinar by Lev Bishop is an excellent overview, especially the simple coding examples shown in slides 33ff of his presentation.

[4] QC interfaces: For GPU interfaces, check out this overview of Nvidia CUDA, along with Nvidia’s CUDA ecosystem resources. The open GPU interface is OpenCL hosted by the non-profit Khronos Group at this website.

[5] NP-Complete Algorithms for Machine Learning: At Wikipedia, there is an overview of NP complexity, along with several in-depth entries, starting with NP-Completeness, plus Time Complexity. Linking computational complexity to machine learning using QC is the Quantum Algorithm Zoo by Stephen Jordan of NIST, which lists dozens of hard algorithms. Search the page for ‘learning’ to find all the hard algorithms related to machine learning. Lots!

[6] Big Shift in Abandon Stocks, Embrace Flows: The first article about knowledge flows was Abandon Stocks, Embrace Flows in HBR, followed by The Big Shift Measuring Forces of Change also in HBR. There is now considerable academic research on this topic.

Updated

2017-04-12: add links to the IBM QISKit and Quantum Computing Tech Talk on March 29, 2017.

